Evolution of GPUs

The history of the graphics processing unit (GPU), and of graphics cards more broadly, is a fascinating one, marked by tremendous growth and development since the early days of computing. In this article, we will explore the evolution of graphics cards, from their early beginnings to the present day.

Early Graphics Cards

The earliest graphics hardware consisted of simple display circuitry used to show text and basic graphics on computer screens. It was often built into the computer’s motherboard and had limited capabilities.

One of the first standalone display adapters was the IBM Monochrome Display Adapter (MDA), which was introduced in 1981 with the original IBM PC. The MDA displayed text in 80 columns by 25 rows, had no pixel graphics mode, and was designed primarily for business use.

Also in 1981, IBM introduced the Color Graphics Adapter (CGA), which could display 4 colors at a time from a 16-color palette at a resolution of 320 x 200 pixels. Unlike the MDA, the CGA supported pixel graphics, and it was popular among early PC gamers.

In 1987, IBM introduced the Video Graphics Array (VGA), which could display 16 colors at a resolution of 640 x 480 pixels, or 256 colors at 320 x 200 pixels. The VGA was a major breakthrough in graphics card technology and remained the standard for many years.

Introduction of 3D Graphics

In the early 1990s, 3D graphics began to gain popularity in video games and other applications. This led to the development of dedicated 3D graphics cards, which were designed to handle the complex calculations required for 3D graphics rendering.

One of the first widely adopted 3D graphics cards was the 3dfx Voodoo, which was introduced in 1996. The Voodoo was a dedicated 3D accelerator that worked alongside a separate 2D card, and it could render 3D graphics at a resolution of 640 x 480 pixels, a significant improvement over what earlier graphics cards could manage.

In 1999, Nvidia introduced the GeForce 256, which it marketed as the world's first GPU. It was the first consumer graphics card to perform transform and lighting (T&L) calculations in hardware, work that earlier 3D accelerators had left to the CPU. The GeForce 256 was a major breakthrough in graphics card technology and set the stage for future developments.

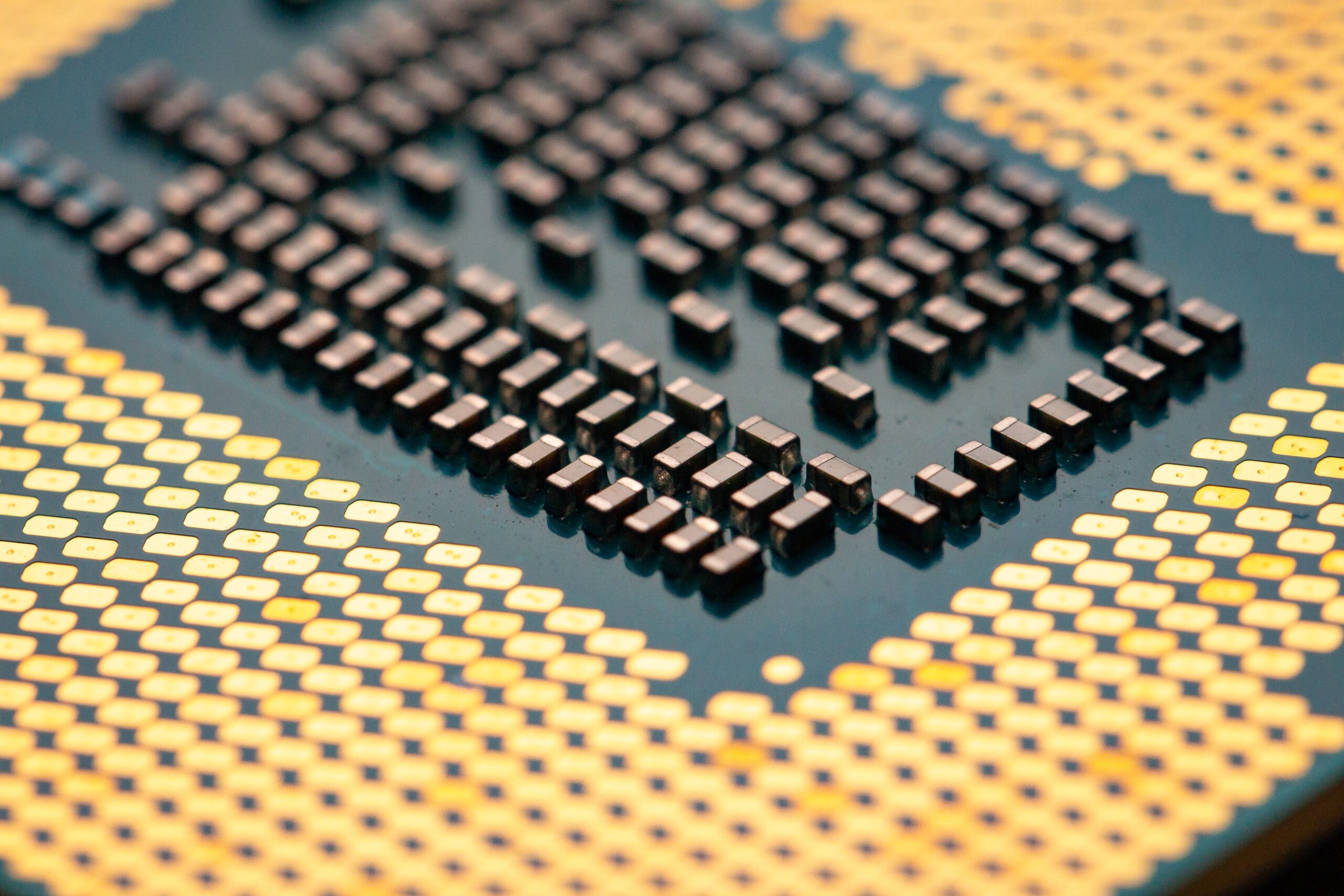

Modern Graphics Cards

Today's graphics cards are incredibly powerful and can render 3D graphics at extremely high resolutions and frame rates. They are used in a wide range of applications, from video games to scientific simulations to machine learning.

Modern graphics cards use a variety of technologies to achieve their impressive performance, including advanced GPUs, high-speed memory, and sophisticated cooling systems. Graphics cards are also optimized for specific applications, such as gaming, video editing, and scientific computing.

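In recent years, graphics cards have also been widely used for cryptocurrency mining, where they perform the repeated hash calculations that proof-of-work mining requires. Bitcoin mining has since moved largely to specialized ASIC hardware, and Ethereum ended mining altogether when it switched to proof of stake in 2022, but GPUs played a central role in the early growth of both.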

Future of Graphics Cards

The future of graphics cards is an exciting one, as they are likely to keep becoming more powerful and sophisticated. Some of the areas where we can expect continued development in the coming years include:

- Ray tracing technology: Ray tracing is a rendering technique that simulates the way light behaves in the real world. It requires a lot of computational power, but it can produce incredibly realistic graphics. Recent graphics cards already include dedicated ray tracing hardware, and this capability is likely to expand.
- Artificial intelligence: Graphics cards are already being used for machine learning applications, and this trend is likely to continue. We can expect to see more hardware that is specifically designed for AI workloads.
- Virtual and augmented reality: VR and AR are growing markets, and graphics cards will play a crucial role in their development. We can expect to see graphics cards that are further optimized for VR and AR applications in the coming years.
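To make the ray tracing idea mentioned above more concrete, here is a minimal sketch in Python. It is not how a GPU or any particular graphics API implements ray tracing. It only illustrates the core step of shooting one ray per pixel, testing it against a sphere, and shading the hit point by how directly it faces a light. The scene layout, the function names, and the ASCII brightness ramp are all assumptions made for this illustration.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(x * x for x in v))
    return tuple(x / length for x in v)


def ray_sphere_hit(origin, direction, center, radius):
    """Return the distance along a unit-length ray to the nearest
    intersection with a sphere, or None if the ray misses it."""
    oc = tuple(o - c for o, c in zip(origin, center))
    # Quadratic t^2 + b*t + c = 0 (the leading coefficient is 1
    # because the direction is unit length).
    b = 2.0 * sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0.0:
        return None
    root = math.sqrt(disc)
    for t in ((-b - root) / 2.0, (-b + root) / 2.0):
        if t > 0.0:
            return t
    return None


def shade(origin, direction, center, radius, light_dir):
    """Return a brightness in [0, 1] using simple diffuse shading."""
    t = ray_sphere_hit(origin, direction, center, radius)
    if t is None:
        return 0.0  # the ray missed the sphere: background
    hit = tuple(o + t * d for o, d in zip(origin, direction))
    normal = normalize(tuple(h - c for h, c in zip(hit, center)))
    # Brightness depends on the angle between the surface and the light.
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))


def render(width=32, height=16):
    """Trace one ray per character cell and return rows of ASCII art."""
    camera = (0.0, 0.0, 0.0)
    center, radius = (0.0, 0.0, 3.0), 1.0      # hypothetical scene
    light_dir = normalize((1.0, 1.0, -1.0))    # direction toward the light
    ramp = " .:-=+*#"                          # dark to bright
    rows = []
    for j in range(height):
        row = ""
        for i in range(width):
            x = (i + 0.5) / width * 2.0 - 1.0
            y = 1.0 - (j + 0.5) / height * 2.0
            direction = normalize((x, y, 1.5))
            brightness = shade(camera, direction, center, radius, light_dir)
            row += ramp[int(brightness * (len(ramp) - 1))]
        rows.append(row)
    return rows


if __name__ == "__main__":
    print("\n".join(render()))
```

Even this toy version runs an intersection test for every pixel. Real scenes contain millions of triangles and trace many rays per pixel, including bounces for reflections and shadows, which is why the technique demands so much computational power.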

Conclusion

The evolution of graphics cards has been remarkable, and they are likely to continue playing a vital role in the development of computing and other technologies. Graphics cards have come a long way from their early beginnings as simple devices